
Austin Deep Learning

# Turn 2D photos into 3D objects!

Speakers: Hassan Hayat

Computer graphics is everywhere in modern society, from movies and video games to physics simulations and automobile engineering. Despite remarkable advances in rendering life-like scenes, creating photorealistic models remains very costly and often relies on large teams of 3D artists. If computer vision turns images into semantic representations, computer graphics can be seen as the inverse process: it takes semantic “scene descriptions” and produces images. This talk will introduce “Differentiable Rendering”, a method that bridges computer vision and graphics, as well as “NeRF” (Neural Radiance Fields), a deep learning model that can generate a 3D model from a few input images taken from different angles.

About the speaker: Hassan is the founder of HeyBot AI, an avatar-driven chatbot service, and has 6+ years of experience developing prediction models for private equity due diligence and value creation. He is also passionate about computer graphics and deep learning, and is working to make machines feel more human.

Our Sponsors:

Weights & Biases

Think of W&B like GitHub for machine learning models. With a few lines of code, **save everything you need to debug, compare and reproduce your models** — architecture, hyperparameters, git commits, model weights, GPU usage, and even datasets and predictions.

W&B is excited to sponsor Austin Deep Learning and help local ML communities to connect, exchange, collaborate and discuss the latest in ML. Making research easier, more explainable, and more reproducible is a big reason we founded our company in the first place! You can use Weights & Biases for free and start tracking and visualizing models in 5 minutes, then collaborate in teams and document your findings in reports!

Used by top researchers, including teams at Qualcomm, NVIDIA, OpenAI, Lyft, Pfizer, Toyota, GitHub, and MILA, W&B is part of the new standard of best practices for machine learning.

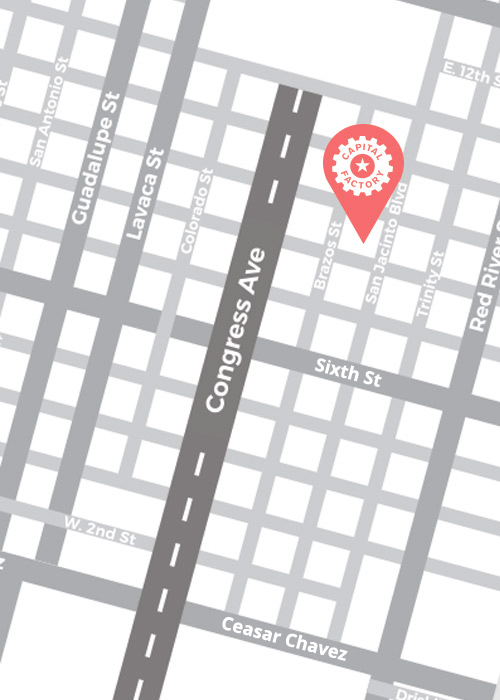
