
Austin Deep Learning – Do Neural Networks Overthink?

The decision-making process of conventional DNNs, the forward pass, requires the same computational effort on every input, whether it is simple or difficult to classify. This raises a question: are deep neural networks susceptible to overthinking? By analogy with human overthinking, we hypothesize that, for simple inputs, computation beyond the early layers wastes power and may even lead to misclassification. We define overthinking in terms of how a network's prediction evolves throughout the forward pass. To address overthinking in DNNs, we propose a new paradigm known as Dynamic Neural Networks.
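One common way to act on this idea is to attach a lightweight internal classifier to several intermediate layers and stop the forward pass at the first classifier whose softmax confidence clears a threshold. The framework-free Python below is a hypothetical sketch under that assumption, not the speaker's implementation. The names `early_exit` and `overthinks`, the 0.9 threshold, and the toy logits are all illustrative. The sketch also flags overthinking in the sense described above: an earlier classifier predicted the right label, but the final layer did not.

```python
import math
from typing import List, Sequence, Tuple


def softmax(logits: Sequence[float]) -> List[float]:
    """Numerically stable softmax over one logit vector."""
    m = max(logits)
    exps = [math.exp(x - m) for x in logits]
    total = sum(exps)
    return [e / total for e in exps]


def early_exit(per_layer_logits: Sequence[Sequence[float]],
               threshold: float = 0.9) -> Tuple[int, int]:
    """Return (exit_layer, predicted_class).

    Hypothetical early-exit rule: walk the internal classifiers in
    forward-pass order and stop at the first one whose top softmax
    probability reaches `threshold`. If none does, fall back to the
    final layer's prediction.
    """
    for layer, logits in enumerate(per_layer_logits):
        probs = softmax(logits)
        confidence = max(probs)
        if confidence >= threshold or layer == len(per_layer_logits) - 1:
            return layer, probs.index(confidence)
    raise ValueError("per_layer_logits must be non-empty")


def overthinks(per_layer_logits: Sequence[Sequence[float]], label: int) -> bool:
    """True if some earlier classifier is correct but the final one is wrong."""
    preds = [max(range(len(logits)), key=lambda i: logits[i])
             for logits in per_layer_logits]
    return preds[-1] != label and label in preds[:-1]


# Toy logits from three internal classifiers for one input (illustrative only).
toy_logits = [[2.0, 0.1, 0.0], [4.0, 0.5, 0.2], [0.3, 1.5, 0.2]]
print(early_exit(toy_logits, threshold=0.9))  # (1, 0)
print(overthinks(toy_logits, label=0))        # True
```

With these toy logits, the second classifier (index 1) is already about 95% confident in class 0, so the sketch exits there. Running to the end would have switched the prediction to class 1, which `overthinks` reports. The threshold trades accuracy for compute: raising it sends more inputs to deeper layers.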

About the speaker:

Sunny Sanyal is a PhD student in Electrical and Computer Engineering at UT Austin. He is also a researcher working on problems such as dynamic neural networks and self-supervised learning.

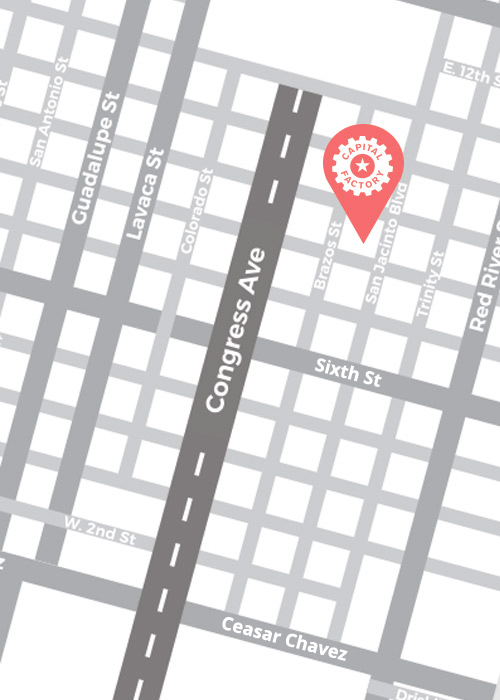
